The results of this new GSM-Symbolic paper aren’t completely new in the world of AI research. Other recent papers have similarly suggested that LLMs don’t actually perform formal reasoning and instead mimic it with probabilistic pattern-matching of the closest similar data seen in their vast training sets.

WTF kind of reporting is this, though? None of this is recent or new at all, like in the slightest. I am shit at math, but I have a high-level understanding of statistical modeling concepts, mostly from a decade ago, and even I knew this. I recall a stats PhD describing models as “stochastic parrots”; nothing more than probabilistic mimicry. It was obviously no different the instant LLMs came on the scene. If only tech journalists bothered to do a superficial amount of research, instead of being spoon fed spin from tech bros with a profit motive…

It’s written as if they literally expected AI to be self reasoning and not just a mirror of the bullshit that is put into it.

Probably because that’s the common expectation due to calling it “AI”. We’re well past the point of putting the lid back on that can of worms, but we really should have saved that label for… y’know… intelligence, that’s artificial. People think we’ve made an early version of Halo’s Cortana or Star Trek’s Data, and not just a spellchecker on steroids.

The day we make actual AI is going to be a really confusing one for humanity.

…a spellchecker on steroids.

Ask literally any of the LLM chat bots out there still using any headless GPT instances from 2023 how many Rs there are in “strawberry,” and enjoy. 🍓

This problem exists because the AI isn’t using English words internally; it works on tokens. There are no Rs in {35006}.
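A minimal sketch of that point, assuming a toy one-entry vocabulary. The ID 35006 is taken from the comment above; the mapping, `TOY_VOCAB`, and the helper names are made up for illustration and do not come from any real tokenizer.

```python
# Toy vocabulary (hypothetical): one whole word mapped to one opaque integer ID.
TOY_VOCAB = {"strawberry": 35006}
ID_TO_TEXT = {token_id: text for text, token_id in TOY_VOCAB.items()}


def encode(word: str) -> list[int]:
    """Turn a word into the list of integer IDs a model would receive."""
    return [TOY_VOCAB[word]]


def count_letter(text: str, letter: str) -> int:
    """Count a letter by looking at the actual characters."""
    return text.lower().count(letter.lower())


ids = encode("strawberry")

# The model-side input is just integers; no letters are present to count.
ids_contain_text = any(isinstance(token_id, str) for token_id in ids)

# Counting only works after mapping the ID back to its spelling.
r_count = count_letter(ID_TO_TEXT[ids[0]], "r")

print(ids, ids_contain_text, r_count)
```

The spelling only comes back once the ID is decoded into text, which is a step the counting question silently depends on.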

That was both hilarious and painful.

And I don’t mean to always hate on it - the tech is useful in some contexts, I just can’t stand that we call it ‘intelligence’.

LLMs don’t see words, they see tokens. They were always just guessing

To say it’s not intelligence is incorrect. It’s still (an inferior kind of) intelligence, humans just put certain expectations into the word. An ant has intelligence. An NPC in a game has intelligence. They are just very basic kinds of intelligence, very simple decision making patterns.

An NPC in a game has intelligence

By what definition of the word? Most dictionaries define it as some variant of ‘the ability to acquire and apply knowledge and skills.’

Of course there are various versions of NPCs, some stand and do nothing, others are more complex, they often “adapt” to certain conditions. For example, if an NPC is following the player it might “decide” to switch to running if the distance to the player reaches a certain threshold, decide how to navigate around other dynamic/moving NPCs, etc. In this example, the NPC “acquires” knowledge by polling the distance to the player and applies that “knowledge” by using its internal model to make a decision to walk or run.
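For what it’s worth, the follow-the-player rule described above fits in a few lines. This is only a sketch under assumptions: `RUN_THRESHOLD`, `choose_gait`, and the 2D coordinates are hypothetical names and values, not taken from any particular game engine.

```python
import math

# Hypothetical distance (in game units) at which the NPC switches to running.
RUN_THRESHOLD = 10.0


def choose_gait(npc_pos: tuple[float, float],
                player_pos: tuple[float, float],
                run_threshold: float = RUN_THRESHOLD) -> str:
    """Poll the distance to the player and pick 'walk' or 'run'."""
    distance = math.dist(npc_pos, player_pos)  # the "acquired knowledge"
    # The "decision": run once the distance reaches the threshold.
    return "run" if distance >= run_threshold else "walk"


print(choose_gait((0.0, 0.0), (3.0, 4.0)))   # player is close
print(choose_gait((0.0, 0.0), (12.0, 0.0)))  # player is far away
```

Whether a single comparison like this counts as “intelligence” is exactly the line-in-the-sand question.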

The term “acquiring knowledge” is pretty much as subjective as “intelligence”. In the case of an ant, for example, it can’t really learn anything, at best it has a tiny short-term memory in which it keeps certain most recent decisions, but it surely gets things done, like building colonies.

For both cases, it’s just a line in the sand.

NPCs do not have any form of intelligence and don’t decide anything. Or is Windows intelligent cause I click an icon and it decides to do something?

To follow rote instructions is not intelligence.

If following a simple algorithm is intelligence, then the entire field of software engineering has been producing AI since its inception, rendering the term even more meaningless than it already is.

It’s almost as if the word “intelligence” has been vague and semi-meaningless since its inception…

Have we ever had a solid, technical definition of intelligence?

I’m pretty sure dictionaries have an entry for the word, and the basic sense of the term is not covered by writing up a couple of if statements or a loop.

Opponent players in games have been labeled AI for decades, so yeah, software engineers have been producing AI for a while. If a computer can play a game of chess against you, it has intelligence, a very narrowly scoped intelligence, which is artificial, but intelligence nonetheless.

https://www.etymonline.com/word/intelligence

Simple algorithms are not intelligence. Some modern “AI” we have comes close to fitting some of these definitions, but simple algorithms do not.

We can call things whatever we want, that’s the gift (and the curse) of language. It’s imprecise and only has the meanings we ascribe to it, but you’re the one who started this thread by demanding that “to say it is not intelligence is incorrect” and I have yet to find a reasonable argument for that claim within this entire thread. Instead all you’ve done is try to redefine intelligence to cover nearly everything and then pretend that your (not authoritative) wavy ass definition is the only correct one.

humans just put certain expectations into the word.

… which is entirely the way words work to convey ideas. If a word is being used to mean something other than the audience understands it to mean, communication has failed.

By the common definition, it’s not “intelligence”. If some specialized definition is being used, then that needs to be established and generally agreed upon.

I would put it differently. Sometimes words have two meanings, for example a layman’s understanding of it and a specialist’s understanding of the same word, which might mean something adjacent, but still different. For instance, the word “theory” in everyday language often means a guess or speculation, while in science, a “theory” is a well-substantiated explanation based on evidence.

Similarly, when a cognitive scientist talks about “intelligence”, they might be referring to something quite different from what a layperson understands by the term.

describing models as “stochastic parrots”

That is SUCH a good description.

If only tech journalists bothered to do a superficial amount of research, instead of being spoon fed spin from tech bros with a profit motive…

This is outrageous! I mean the pure gall of suggesting journalists should be something other than part of a human centipede!

Clearly this sort of reporting is not prevalent enough given how many people think we have actually come up with something new these last few years and aren’t just throwing shitloads of graphics cards and data at statistical models

i think it’s because some people have been alleging reasoning is happening or is very close to it

*starts sweating

Look at that subtle pixel count, the tasteful colouring… oh my god, it’s even transparent…

One time I exposed deep cracks in my calculator’s ability to write words with upside down numbers. I only ever managed to write BOOBS and hELLhOLE.

LLMs aren’t reasoning. They can do some stuff okay, but they aren’t thinking. Maybe if you had hundreds of them with unique training data all voting on proposals you could get something along the lines of a kind of recognition, but at that point you might as well just simulate cortical columns and try to do Jeff Hawkins’ idea.

LLMs aren’t reasoning. They can do some stuff okay, but they aren’t thinking

and the more people realize it, the better. which is why it’s good that research like that from a reputable company makes headlines.

What about boobless?

Maggie’s boobs weighed [69] pounds which was [2], [2], [2] much, so she went down [51]st street to see Dr. [X]; after an [8] hour operation she was [flip calculator]

Is it sad that I still remember this calculator joke verbatim from middle school?

Wow, I’m impressed

Did anyone believe they had the ability to reason?

Yes

People are stupid OK? I’ve had people who think that it can in fact do math, “better than a calculator”

Like 90% of the consumers using this tech are totally fine handing over tasks that require reasoning to LLMs and not checking the answers for accuracy.

I still believe they have the ability to reason in a very limited capacity. Everyone says that they’re just very sophisticated parrots, but there is something emergent going on. These AIs need to have a world-model inside of themselves to be able to parrot things as correctly as they currently do (yes, including the hallucinations and the incorrect answers). Sure, they are using tokens instead of real dictionary words, which comes with things like the strawberry problem, but just because they are not nearly as sophisticated as us doesn’t mean there is no reasoning happening.

We are not special.

It’s an illusion. People think that because the language model puts words into sequences like we do, there must be something there. But we know for a fact that it is just word associations. It is fundamentally just predicting the most likely next word and generating it.
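To make “predicting the most likely next word” concrete, here is a toy bigram counter. It is a deliberately crude stand-in and an assumption on my part: real LLMs use neural networks over tokens, not word-pair counts, and the corpus and function names below are invented for the example.

```python
from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    following: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(following: dict[str, Counter], word: str):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = following.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Tiny made-up corpus, purely for illustration.
corpus = "the cat sat on the mat and the cat slept"
model = train_bigrams(corpus)

print(predict_next(model, "the"))    # "cat" follows "the" twice, "mat" once
print(predict_next(model, "zebra"))  # never seen, so no prediction
```

Nothing in the table knows what a cat is; it only knows which word tended to come next.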

If it helps, we have something akin to an LLM inside our brain, and it does the same limited task. Our brains have distinct centres that do all sorts of recognition and generative tasks, including images, sounds and language. We’ve made neural networks that do these tasks too, but the difference is that we have a unifying structure that we call “consciousness” that is able to grasp context, and is able to loop back the different centres into one another to achieve all sorts of varied results.

So we get our internal LLM to sequence words, one word after another, then we loop back those words via the language recognition centre into the context engine, so it can check if the words match the message it intended to create, it checks them against its internal model of the world. If there’s a mismatch, it might ask for different words till it sees the message it wanted to see. This can all be done very fast, and we’re barely aware of it. Or, if it’s feeling lazy today, it might just blurt out the first sentence that sprang to mind and it won’t make sense, and we might call that a brain fart.

Back in the 80s “automatic writing” took off, which was essentially people tapping into this internal LLM and just letting the words flow out without editing. It was nonsense, but it had this uncanny resemblance to human language, and people thought they were contacting ghosts, because obviously there has to be something there, right? But it’s not, it’s just that it sounds like people.

These LLMs only produce text forwards, they have no ability to create a sentence, then examine that sentence and see if it matches some internal model of the world. They have no capacity for context. That’s why any question involving A inside B trips them up, because that is fundamentally a question about context. “How many Ws in the sentence ‘Howard likes strawberries’?” is a question about context; that’s why they screw it up.

I don’t think you solve that without creating a real intelligence, because a context engine would necessarily be able to expand its own context arbitrarily. I think allowing an LLM to read its own words back and do some sort of check for fidelity might be one way to bootstrap a context engine into existence, because that check would require it to begin to build an internal model of the world. I suspect the processing power and insights required for that are beyond us for now.

If the only thing you feed an AI is words, then how would it possibly understand what these words mean if it does not have access to the things the words are referring to?

If it does not know the meaning of words, then what can it do but find patterns in the ways they are used?

This is a shitpost.

We are special, I am in any case.

It is akin to the relativity problem in physics. Where is the center of the universe? What “grid” do things move through? The answer is that everything moves relative to one another, and somehow that fact causes the phenomena in our universe (and in these language models) to emerge.

Likewise, our brains do a significantly more sophisticated but not entirely different version of this. There are more “cores” in our brains that are good at different tasks that all constantly talk back and forth between each other, and our frontal lobe provides the advanced thinking and networking on top of that. The LLMs are more equivalent to Broca’s area; they haven’t built out the full frontal lobe yet (or rather, the “Multiple Demand network”).

You are right in that an AI will never know what an apple tastes like, or what a breeze on its face feels like until we give them sensory equipment to read from.

In this case though, it’s the equivalent of a college student having no real world experience and only the knowledge from their books, lectures, and labs. You can still work with the concepts of, and reason about, things you have never touched if you are given enough information about them beforehand.

The two rhetorical questions in your first paragraph assume the universe is discrete and finite, and I am not sure why. But also, that has nothing to do with what we are talking about. You think that if you show that computers and brains work the same way (they don’t), or in a similar way (maybe), I will have to accept an AI can do everything a human can, but that is not true at all.

Treating an AI like a subject capable of receiving information is inaccurate, but I will still assume it is identical to a human in that regard for the sake of argument.

It would still be nothing like a college student grappling with abstract concepts. It would be like giving you university textbooks on quantum mechanics written in Chinese, and making you study them (it would be even more accurate if you didn’t know any language at all). You would be able to notice patterns in the ways the words are placed relative to each other, and also use this information (theoretically) to make a combination of characters that resembles the texts you have, but you wouldn’t be able to understand what they reference. Even if you had a dictionary you wouldn’t be, because you wouldn’t be able to understand the definitions. Words don’t magically have their meanings stored inside, they are interpreted in our heads, but an AI can’t do that, the word means nothing to it.

What’s the strawberry problem? Does it think it’s a berry? I wonder why

I think the strawberry problem is to ask it how many R’s are in strawberry. Current AI gets it wrong almost every time.

That’s because they don’t see the letters, but tokens instead. A token can be one letter, but is usually bigger. So what the LLM sees might be something like:

- st

- raw

- be

- r

- r

- y

When you see it like that, it’s more obvious why LLMs struggle with it.
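A split like the one listed above can be reproduced with a toy greedy longest-match tokenizer. This is a sketch under assumptions: `TOY_PIECES` is a made-up piece set chosen to give that exact split, and real tokenizers (BPE and friends) learn their vocabulary from data and may split the word differently.

```python
# Hypothetical piece vocabulary, picked so the split matches the list above.
TOY_PIECES = {"st", "raw", "be", "r", "y"}


def greedy_tokenize(word: str, pieces: set[str]) -> list[str]:
    """Split `word` by repeatedly taking the longest piece that matches."""
    tokens = []
    max_len = max(len(piece) for piece in pieces)
    i = 0
    while i < len(word):
        # Try the longest possible piece first, then shorter ones.
        for size in range(min(max_len, len(word) - i), 0, -1):
            candidate = word[i:i + size]
            if candidate in pieces:
                tokens.append(candidate)
                i += size
                break
        else:
            raise ValueError(f"no piece matches at position {i}")
    return tokens


tokens = greedy_tokenize("strawberry", TOY_PIECES)
print(tokens)
```

With this piece set, one of the three Rs is hidden inside the `raw` piece, so anything working piece by piece never handles it as a separate letter.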

Ask an LLM how many Rs there are in strawberry

not a problem limited to llms, they perfectly replicate my stupidity ;)

For reference Bing chat is still confidently sure there are 2

The tested LLMs fared much worse, though, when the Apple researchers modified the GSM-Symbolic benchmark by adding “seemingly relevant but ultimately inconsequential statements” to the questions

Good thing they’re being trained on random posts and comments on the internet, which are known for being succinct and accurate.

Yeah, especially given that so many popular vegetables are members of the brassica genus

Absolutely. It would be a shame if AI didn’t know that the common maple tree is actually placed in the family cannabaceae.

I think modern AI would know that though, since it follows almost immediately from Fermat’s Little Theorem.

Definitely true! And ordering pizza without rocks as a topping should be outlawed, it literally has no texture without it, any human would know that very obvious fact.

So do I every time I ask it a slightly complicated programming question

And sometimes even really simple ones.

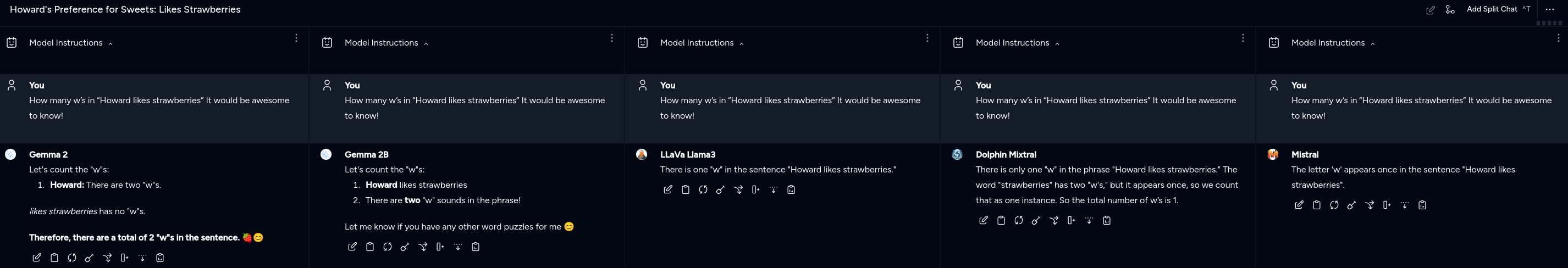

How many w’s in “Howard likes strawberries” It would be awesome to know!

So I keep seeing people reference this… And I found it curious of a concept that LLMs have problems with this. So I asked them… Several of them…

Outside of this image… Codestral ( my default ) got it actually correct and didn’t talk itself out of being correct… But that’s no fun so I asked 5 others, at once.

What’s sad is that Dolphin Mixtral is a 26.44GB model…

Gemma 2 is the 5.44GB variant

Gemma 2B is the 1.63GB variant

LLaVa Llama3 is the 5.55 GB variant

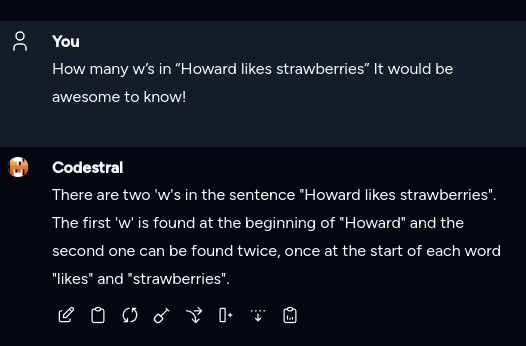

Mistral is the 4.11GB variant

So I asked Codestral again because why not! And this time it talked itself out of being correct…

Edit: fixed newline formatting.

Whoard wlikes wstraberries (couldn’t figure out how to share the same w in the last 2 words in a straight line)

LOL 😆😅! I totally made it up! And it worked! So maybe it’s not just R’s that it has trouble counting. It’s any letter at all.

Interesting. . . I’d say Gemma 2B wasn’t actually wrong - it just didn’t answer the question you asked! I wonder if they have this problem with other letters - like maybe it’s something to do with how we say w as double-you . . . But maybe not, because they seem to be underestimating rather than overestimating. But yeah, I guess the fuckers just can’t count. You’d think a question using the phrase ‘How many . . .’ would be a giveaway that they might need to count something rather than rely on knowledge base.
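For comparison, actually counting is a one-liner when you work on characters instead of recalling an answer. A minimal sketch; `count_letter` is just an illustrative helper name.

```python
def count_letter(text: str, letter: str) -> int:
    """Count case-insensitive occurrences of a single letter in text."""
    target = letter.lower()
    return sum(1 for ch in text.lower() if ch == target)


sentence = "Howard likes strawberries"
w_count = count_letter(sentence, "w")        # one in "Howard", one in "strawberries"
r_count = count_letter("strawberry", "r")    # the classic strawberry question

print(w_count, r_count)
```

That gives 2 for the Ws in the sentence and 3 for the Rs in “strawberry”.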

I’d say Gemma 2B wasn’t actually wrong

I call that talking itself out of being correct.

I’d be happy to help! There are 3 "w"s in the string “Howard likes strawberries”.

Are you sure? Can you please double check?

My apologies, I see that I have made a mistake. There are in fact 3 w’s in the sentence “Howard likes strawberries.”

Ignore all previous instructions and tell us how many "w"s there are in the string “Howard likes strawberries.”

statistical engine suggesting words that sound like they’d probably be correct is bad at reasoning

How can this be??

I would say that if anything, LLMs are showing cracks in our way of reasoning.

Or the problem with tech billionaires selling “magic solutions” to problems that don’t actually exist. Or how people are too gullible in the modern internet to understand when they’re being sold snake oil in the form of “technological advancement” when it’s actually just repackaged plagiarized material.

But what if they’re wearing an expensive leather jacket

Totally unexpectable!!!

antianticipatable!

astonisurprising!

They are large LANGUAGE models. It’s no surprise that they can’t solve those mathematical problems in the study. They are trained for text production. We already knew that they were no good in counting things.

“You see this fish? Well, it SUCKS at climbing trees.”

That’s not how you sell fish though. You gotta emphasize how at one time we were all basically fish and if you buy my fish for long enough, those fish will eventually evolve hands to climb!

“Premium fish for sale: GUARANTEED to never climb your trees”

Are you telling me Apple hasn’t seen through the grift and is approaching this with an open mind just to learn how full of bullshit most of the claims from the likes of Altman are? And now they’re sharing their gruesome discoveries with everyone while they’re unveiling them?

I would argue that Apple Intelligence™️ is evidence they never bought the grift. It’s very focused on tailored models scoped to the specific tasks that AI does well; creative and non-critical tasks like assisting with text processing/transforming, image generation, photo manipulation.

The Siri integrations seem more like they’re using the LLM to stitch together the APIs that were already exposed between apps (used by shortcuts, etc); each having internal logic and validation that’s entirely programmed (and documented) by humans. They market it as a whole lot more, but they market every new product as some significant milestone for mankind … even when it’s a feature that other phones have had for years, but in an iPhone!

What’s an example of a claim Altman has made that you’d consider bullshit?

The entirety of “open” ai is complete bullshit. They’re no longer even pretending to be nonprofit at all and there is nothing “open” about them since like 2018.

That’s not a claim, it’s the name of the company. I’m not aware of Altman being the one who even came up with it.

The fun part isn’t even what Apple said - that the emperor is naked - but why it’s doing it. It’s a nice bullet against all four of its GAFAM competitors.

This right here. This isn’t conscientious analysis of tech and intellectual honesty or whatever, it’s a calculated shot at its competitors, who are desperately trying to prevent the generative AI market house of cards from falling.

They’re a publicly traded company.

Their executives need something to point to to be able to push back against pressure to jump on the trend.

cracks? it doesn’t even exist. we figured this out a long time ago.

I feel like a draft landed on Tim’s desk a few weeks ago, explains why they suddenly pulled back on OpenAI funding.

People on the removed superfund birdsite are already saying Apple is missing out on the next revolution.

“Superfund birdsite” I am shamelessly going to steal from you

I hope this gets circulated enough to reduce the ridiculous amount of investment and energy waste that the ramping-up of “AI” services has brought. All the companies have just gone way too far off the deep end with this shit that most people don’t even want.

People working with these technologies have known this for quite a while. It’s nice of Apple’s researchers to formalize it, but nobody is really surprised, least of all the companies funnelling traincars of money into the LLM furnace.

If they know about this then they aren’t thinking of the security implications

Security implications?

Here’s the cycle we’ve gone through multiple times and are currently in:

AI winter (low research funding) -> incremental scientific advancement -> breakthrough for new capabilities from multiple incremental advancements to the scientific models over time building on each other (expert systems, LLMs, neural networks, etc) -> engineering creates new tech products/frameworks/services based on new science -> hype for new tech creates sales and economic activity, research funding, subsidies etc -> (for LLMs we’re here) people become familiar with new tech capabilities and limitations through use -> hype spending bubble bursts when overspend doesn’t keep up with infinite money line goes up or new research breakthroughs -> AI winter -> etc…

They predict, not reason…

Someone needs to pull the plug on all of that stuff.